PID Control

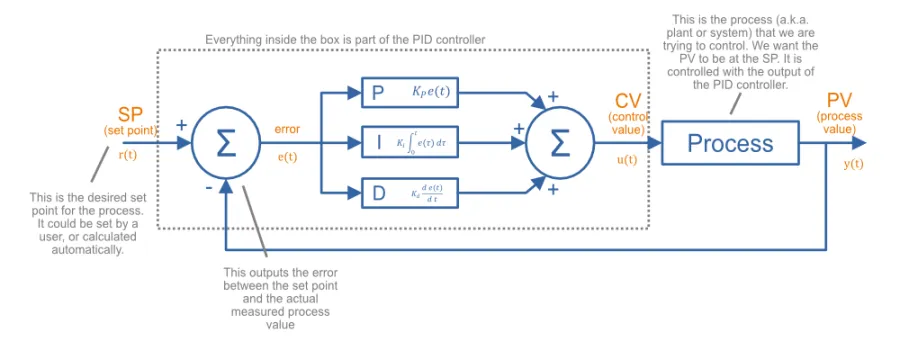

PID control (a.k.a. three-term control [1]) is a feedback control technique that is very common in industrial applications. It is a good technique to use when you have a desired set-point for a process (system) to be at, and are able to control it with one variable. PID is an acronym for Proportional-Integral-Derivative, and describes the three mathematical operations used to calculate the output needed to control the process.

There are a few different PID topologies, including:

- Parallel form: Also known as ideal form or non-interacting. Easiest to understand, common in academia.

- Standard form: Coefficients have better intuitive meaning attached to them (and hence easier to tune), topology is common in industry.

These topologies are described in more detail in the following sections.

Common Terminology

No matter the topology, the goal of a PID controller is to control a process, given a single measured process value ($y(t)$ or PV) that you want controlled (e.g. the temperature of a heater) and a single variable you can adjust, called the control value ($u(t)$ or CV) (e.g. the voltage you can apply to the heater's coils). The control value is the output of the PID controller.

The state you want to get the process to is called the set-point ($r(t)$ or SP). The set-point has the same units as the process value. The units of the control value are whatever is used to control the process.

The error ($e(t)$) is always defined as the set-point minus the process value, so the error is positive when the actual value is less than what it needs to be. It is defined by the following equation:

$$ e(t) = r(t) - y(t) $$

where:

$e(t)$ is the error

$r(t)$ is the set-point (SP)

$y(t)$ is the process value (PV)

and everything else as previously mentioned

PID in Parallel Form

Inside a PID controller in parallel form (a.k.a. ideal form, non-interacting [1]), the error is separately fed into the P (proportional), I (integral) and D (derivative) blocks of the PID controller. These blocks act on the error in different ways, and their outputs are summed together to generate the control value. The way these P, I and D blocks work is explained below.

This is called the parallel form because if you draw it as a block diagram, the proportional, integral and derivative terms all act in parallel to one another. The parallel form is easy to understand, but not so intuitive to tune [3]. Much academic material mentions only this parallel form.

The governing equation of a PID controller in parallel form is [1][3]:

$$ u(t) = K_p e(t) + K_i \int_0^t e(\tau)\,d\tau + K_d \frac{de(t)}{dt} $$

where:

$u(t)$ is the calculated control value (CV)

$e(t)$ is the error

$t$ is the current time

$\tau$ is the variable of integration (takes on values from time=0 to time=t)

$K_p$ is the proportional gain

$K_i$ is the integral gain

$K_d$ is the derivative gain

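This can also be written in the Laplace domain as a transfer function [1]:

$$ \frac{U(s)}{E(s)} = K_p + \frac{K_i}{s} + K_d s $$

This PID equation is in the continuous time domain. However, nowadays most PID control loops are implemented digitally. The discrete equation is written:

$$ u(k) = K_p e(k) + K_i \Delta t \sum_{i=0}^{k} e(i) + K_d \frac{e(k) - e(k-1)}{\Delta t} $$

where:

$k$ is the index of the current sample

$\Delta t$ is the time period between samples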

This equation is suitable for implementing in code. There also exists the Z transformation of the equation, but this obscures the physical meaning of the parameters used in the controller.
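As a rough illustration, the discrete parallel-form calculation (proportional term, plus integral gain times the sample period times the running sum of errors, plus derivative gain times the change in error over the sample period) could be sketched in Python as below. The class name `ParallelPid` and its method names are made up for this example, and the error before the first sample is assumed to be 0.

```python
class ParallelPid:
    """Minimal discrete PID controller in parallel form (illustrative sketch only)."""

    def __init__(self, kp: float, ki: float, kd: float) -> None:
        self.kp = kp  # proportional gain
        self.ki = ki  # integral gain
        self.kd = kd  # derivative gain
        self.error_sum = 0.0   # running sum of all errors seen so far
        self.last_error = 0.0  # previous error (assumed 0 before the first sample)

    def update(self, set_point: float, process_value: float, dt: float) -> float:
        """Return the control value for one sample; dt is the sample period."""
        error = set_point - process_value
        self.error_sum += error

        p_term = self.kp * error
        i_term = self.ki * dt * self.error_sum
        d_term = self.kd * (error - self.last_error) / dt

        self.last_error = error
        return p_term + i_term + d_term


pid = ParallelPid(kp=2.0, ki=0.5, kd=0.1)
control_value = pid.update(set_point=1.0, process_value=0.0, dt=0.1)
print(control_value)
```

Note that this bare-bones version has no output limiting, no protection against integral windup and no protection against derivative kick, all of which are discussed later in this article.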

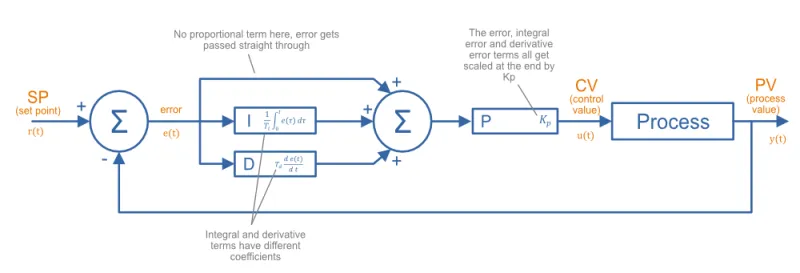

PID in Standard Form

A PID controller in standard form (a.k.a. ISA standard form [4][5]) is more common in industry than the parallel form [6]. The proportional gain $K_p$ is not just applied to the proportional term but is instead brought out and applied to all three terms (so it can be thought of as a scaling factor). Also, the parameters that influence the integral and derivative terms now have a more intuitive meaning.

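The governing equation of a PID controller in standard form is [1]:

$$ u(t) = K_p \left( e(t) + \frac{1}{T_i} \int_0^t e(\tau)\,d\tau + T_d \frac{de(t)}{dt} \right) $$

where:

$T_i$ is the integral time

$T_d$ is the derivative time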

The integral time refers to a scenario in which the error starts at 0, and then jumps to some fixed value. The proportional term will provide an immediate and fixed response, whilst the integral term will begin at 0 and slowly accumulate. The integral time is the amount of time it takes for the integral term to equal the proportional term.

The derivative time refers to a scenario where the error begins increasing at a fixed rate. The proportional term will start at 0 and begin to increase, whilst the derivative term will provide a fixed response. The derivative time is the amount of time for the proportional term to equal the derivative term.

The parameters $K_i$ and $K_d$ in parallel form are related to the parameters $T_i$ and $T_d$ in standard form by:

$$ K_i = \frac{K_p}{T_i} \qquad K_d = K_p T_d $$
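Comparing the two governing equations term by term, the integral gain of the parallel form equals the proportional gain divided by the integral time, and the derivative gain equals the proportional gain multiplied by the derivative time. A small sketch of converting in both directions is shown below (the function names are invented for this example):

```python
def standard_to_parallel(kp: float, ti: float, td: float) -> tuple[float, float, float]:
    """Convert standard-form (Kp, Ti, Td) into parallel-form (Kp, Ki, Kd)."""
    ki = kp / ti
    kd = kp * td
    return kp, ki, kd


def parallel_to_standard(kp: float, ki: float, kd: float) -> tuple[float, float, float]:
    """Convert parallel-form (Kp, Ki, Kd) into standard-form (Kp, Ti, Td).

    Requires non-zero Kp and Ki, otherwise Ti and Td are undefined.
    """
    ti = kp / ki
    td = kd / kp
    return kp, ti, td


print(standard_to_parallel(kp=2.0, ti=4.0, td=0.5))
print(parallel_to_standard(kp=2.0, ki=0.5, kd=1.0))
```

One consequence worth noting is that changing the proportional gain in standard form also rescales the integral and derivative action, whereas in parallel form the three gains are independent.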

PID In Series Form

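The governing equation of a PID controller in series form is [7]:

$$ u(t) = K_p \left[ \left( 1 + \frac{T_d}{T_i} \right) e(t) + \frac{1}{T_i} \int_0^t e(\tau)\,d\tau + T_d \frac{de(t)}{dt} \right] $$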

The proportional gain affects the P, I and D actions just as with the standard form. The difference is that the integral and derivative constants now also affect the P action. This form is an artifact from the days of simpler, analogue and mechanical PID controllers, in which the physical design of the controller was made easier if the equation was structured this way [7]. I have never seen this implemented on a new design with current digital technologies (e.g. microcontrollers and PLCs).

Gain vs. Bands

Some PID controllers work on absolute inputs and outputs (e.g. temperature in °C for the PV and SP, and power output to heater in Watts as the CV), whilst other PID controllers use inputs/outputs that represent the percentage of total range (i.e. their units are percent). One benefit of using percentages is that the PID loops can require less tuning adjustment when transferring to a different system, as the percentage-based inputs/outputs scale proportionally with the changing ranges of the system.

The proportional band (PB), commonly referred to as the throttling range (TR), is defined as the amount of change in the controlled variable required to drive the loop output from 0 to 100%. When expressed as a percentage, it is the reciprocal of the proportional gain:

$$ G = \frac{100\%}{PB} $$

For example, if the proportional band (PB) is 20%, then the proportional gain (G) is 5.
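The gain in the example above is 100% divided by the proportional band in percent. A tiny helper, assuming both the input and output of the loop are expressed as percentages of range (the function name is hypothetical), might look like:

```python
def proportional_band_to_gain(pb_percent: float) -> float:
    """Convert a proportional band (in percent) to a unitless proportional gain."""
    if pb_percent <= 0.0:
        raise ValueError("Proportional band must be greater than 0%.")
    return 100.0 / pb_percent


print(proportional_band_to_gain(20.0))  # 5.0
```

A narrower band therefore means a higher gain: halving the proportional band doubles the proportional gain.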

Tuning Methods


Manual Tuning

One method to perform manual tuning with a PID controller in parallel form is [8]:

1. Set the system to toggle slowly between two set-points (with ample time between the changes to settle).

2. Set all gains to 0.

3. Increase $K_p$ until the process continually oscillates in response to a disturbance.

4. Increase $K_d$ until the oscillations go away.

5. Repeat steps 3-4 until increasing $K_d$ does not make the oscillations go away.

6. Set $K_p$ and $K_d$ to the last known stable values.

7. Increase $K_i$ until it removes the steady-state error in an appropriate amount of time.

Integral Windup

Integral windup is a common problem with PID controllers. It occurs when a sudden change in the set-point or a large disturbance on the output (really anything that causes a large error between where you are and where you want to be) causes the integral term to build up (remember that the integral term accumulates errors). Once you have reached where you want to be, the integral term has to “unwind”, and will drive the output past the set-point until the accumulated error-time product is unwound.

Ways of preventing integral windup:

Limit Integral Effort To Fixed Values

This is the simplest form of preventing integral windup. Windup is prevented by limiting the integral effort to fixed maximum/minimum values. If the integral effort begins to exceed the set max/min values, it is limited to these max/min values. Note that the integral effort is not reset. This is a common point of confusion, as sometimes the process of preventing integral windup is called “resetting”.
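A minimal sketch of this clamping approach is shown below. The class name, the limits and the choice to accumulate the already-scaled integral effort (rather than the raw error sum) are assumptions made for this example.

```python
def clamp(value: float, lower: float, upper: float) -> float:
    """Limit value to the range [lower, upper]."""
    return max(lower, min(value, upper))


class ClampedIntegrator:
    """Integral term whose effort is limited to fixed min/max values."""

    def __init__(self, ki: float, i_min: float, i_max: float) -> None:
        self.ki = ki
        self.i_min = i_min
        self.i_max = i_max
        self.i_term = 0.0  # accumulated integral effort

    def update(self, error: float, dt: float) -> float:
        # Accumulate as usual, then limit. The effort is clamped, NOT reset to 0.
        self.i_term = clamp(self.i_term + self.ki * error * dt, self.i_min, self.i_max)
        return self.i_term


integrator = ClampedIntegrator(ki=1.0, i_min=-2.0, i_max=2.0)
for _ in range(10):
    effort = integrator.update(error=1.0, dt=1.0)
print(effort)  # 2.0 (without the clamp it would have reached 10.0)
```

Because the effort is held at the limit instead of growing without bound, it starts to unwind as soon as the sign of the error changes.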

Limit Integral Effort Based On Output Limits

If output limits exist (they should for most real-world processes), the integral effort can be limited when the output hits its limits.

For example, say the P, I and D terms were contributing the following effort to the output:

- P = 6

- I = 5

- D = 2

Let's pretend the output is limited to 10. P + I + D = 13, so the output is going to be saturated. The I effort is likely to be even larger next iteration (assuming we haven't reached our set-point yet) and so the output will saturate even more. To prevent this, we could limit the I value so that the sum of all three just hits the saturation limit, e.g. I would be set to 2 (because 10 - 6 - 2 = 2).

Be careful not to set the integral term to a negative value! This could potentially occur if the P + D term were already larger than the saturated value.
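The idea above, including the guard against driving the integral term negative, could be sketched as follows. This is only one possible interpretation: the function name is invented, the limits are assumed to straddle 0, and the integral effort is only ever pulled towards 0, never pushed past it.

```python
def limit_integral_to_output(
    p_term: float, i_term: float, d_term: float, out_min: float, out_max: float
) -> tuple[float, float]:
    """Return (output, limited_i_term), shrinking the I effort if the output saturates."""
    unsaturated = p_term + i_term + d_term

    if unsaturated > out_max:
        # Shrink I so that P + I + D just reaches the upper limit,
        # but never let this push I below 0.
        i_term = min(i_term, max(0.0, out_max - p_term - d_term))
    elif unsaturated < out_min:
        # Mirror image for the lower limit: never let this push I above 0.
        i_term = max(i_term, min(0.0, out_min - p_term - d_term))

    # The output itself is still clamped, as P + D alone may exceed the limits.
    output = max(out_min, min(p_term + i_term + d_term, out_max))
    return output, i_term


print(limit_integral_to_output(6.0, 5.0, 2.0, out_min=-10.0, out_max=10.0))  # (10.0, 2.0)
```

The limited I value would then be stored back into the controller's integral state, so that the next iteration starts accumulating from the limited value.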

Getting Rid Of Derivative Kick

Derivative kick is the name for large output (CV) swings when a step change in the set-point (SP) occurs. When the set-point changes abruptly (you want the temperature of the room to be 20°C, but now someone else has come along and set it to 25°C), the derivative of the error is mathematically infinite! In a discrete, sample-based system this essentially results in a really large number. Remembering that the derivative term is:

$$ K_d \frac{de(t)}{dt} $$

this results in a huge spike (kick) in the output (CV). Luckily, when implementing a PID controller, there is an easy way to fix this. Remember that the error is (where $r(t)$ is the SP and $y(t)$ is the PV):

$$ e(t) = r(t) - y(t) $$

If we take the derivative of this:

$$ \frac{de(t)}{dt} = \frac{dr(t)}{dt} - \frac{dy(t)}{dt} $$

The trick is to now assume the SP is constant. The derivative of a constant is just 0. Thus:

$$ \frac{de(t)}{dt} = -\frac{dy(t)}{dt} $$

So for the derivative term, rather than calculating the change in error as the change in SP - PV, we just use the negative change in PV. In code, this would look like:

```python
# Don't do this, results in derivative kick
delta_error = new_error - last_error

# Do this instead! No derivative kick with a change in set-point!
# Note the minus sign: it is the NEGATIVE change in PV.
delta_error = -(new_PV - last_PV)

d_term = delta_error / delta_time
```

This gets rid of derivative kick in the PID controller when the set-point changes abruptly.
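To make the difference concrete, here is a small self-contained comparison using the room temperature example: the set-point steps from 20°C to 25°C between two samples while the measured temperature has not yet moved. The function names, the derivative gain and the sample period are all assumptions chosen for illustration.

```python
def d_term_on_error(kd: float, error: float, last_error: float, dt: float) -> float:
    """Derivative term calculated from the change in error (suffers from derivative kick)."""
    return kd * (error - last_error) / dt


def d_term_on_measurement(kd: float, pv: float, last_pv: float, dt: float) -> float:
    """Derivative term calculated from the negative change in PV (no derivative kick)."""
    return -kd * (pv - last_pv) / dt


kd, dt = 1.0, 0.5
last_sp, sp = 20.0, 25.0  # set-point steps from 20°C to 25°C
last_pv, pv = 20.0, 20.0  # measured temperature has not changed yet

kick = d_term_on_error(kd, sp - pv, last_sp - last_pv, dt)
no_kick = d_term_on_measurement(kd, pv, last_pv, dt)

print(kick)          # 10.0, and it grows as dt gets smaller
print(no_kick == 0)  # True, the set-point step is not seen by the derivative term
```

Both versions give the same derivative term whenever the set-point is constant, so nothing is lost during normal regulation.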

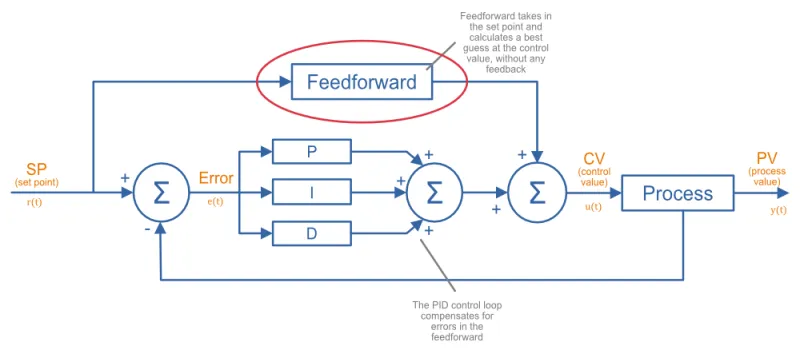

Feedforward

In the context of PID control, feedforward is the act of computing a best guess at the needed control value from measured process variables, and using this information to assist the PID control. Generally you are making predictions about what the control value will need to be, based on physical laws and knowledge of how the system works. PID without feedforward does not predict what is going to happen before it happens; it only operates on the error once it has occurred. Feedforward can help by providing some prediction of what is going to happen before it happens (proactively) [9].

In typical PID controllers with feedforward, a large proportion of the control value is actually generated by the feedforward, and only a small amount by the PID loop, which aims to correct for any inaccuracies in the feedforward model of the system.

Feedforward control can be difficult to implement when the process behaviour is not well understood, or it’s hard to measure the variables which disturb the process. In many cases, when basic PID control alone is sufficient, adding feedforward is not worth the extra complexity.
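As a hedged sketch of the idea, consider a spring-loaded mass (similar to the worked example later in this article) where holding a position requires a force equal to the spring constant multiplied by that position (Hooke's law). The feedforward part computes that force directly from the set-point, and the feedback part only corrects whatever error remains. The function names, the use of proportional-only feedback and the numbers are assumptions made to keep the example short.

```python
def feedforward_force(set_point: float, spring_constant: float) -> float:
    """Model-based guess: the force needed to hold the spring at the set-point."""
    return spring_constant * set_point


def control_value(
    set_point: float, process_value: float, kp: float, spring_constant: float
) -> float:
    """Feedforward guess plus a feedback correction (P-only here for brevity)."""
    feedforward = feedforward_force(set_point, spring_constant)
    feedback = kp * (set_point - process_value)
    return feedforward + feedback


# When the set-point first changes, both parts contribute...
print(control_value(set_point=1.0, process_value=0.0, kp=2.0, spring_constant=20.0))  # 22.0
# ...and once at the set-point, the feedforward alone holds the position.
print(control_value(set_point=1.0, process_value=1.0, kp=2.0, spring_constant=20.0))  # 20.0
```

If the assumed spring constant were wrong, the feedforward guess would be off, and it would then be up to the feedback loop (typically via its integral term) to make up the difference.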

Worked Example Using The Parallel Form

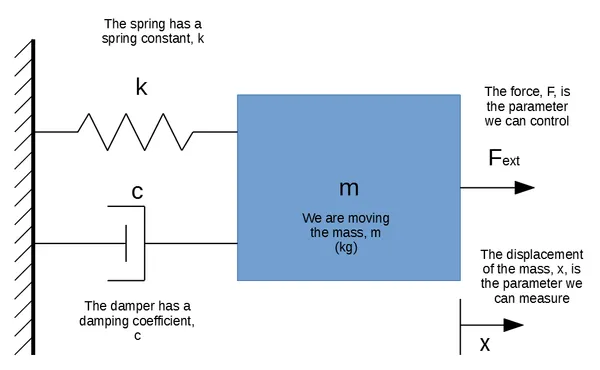

Let's assume a mass/spring/damper process (a.k.a. plant or system) which consists of a mass attached to a fixed wall by a spring and damper. We want to control the position of the mass relative to its resting point (which is where the spring exerts no force). We can control the mass by applying an external force to it, $F_{ext}$.

The Physics

The mass is $m$. The spring has a spring constant, $k$. The damping coefficient is $c$.

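We can model the system using Newton's second law:

$$ F = ma $$

Summing up the forces on the mass gives:

$$ F_{ext} - F_{spring} - F_{damper} = m\ddot{x} $$

Substituting in the equations for the spring ($F_{spring} = kx$) and damper ($F_{damper} = c\dot{x}$) gives:

$$ F_{ext} - kx - c\dot{x} = m\ddot{x} $$

where:

$F_{ext}$ is the external force applied to the mass

$m$ is the mass, $k$ is the spring constant and $c$ is the damping coefficient

$x$ is the displacement

$\dot{x}$ is the first derivative of $x$ (i.e. velocity)

$\ddot{x}$ is the second derivative of $x$ (i.e. acceleration)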

We can simulate this system in code by discretizing the system into small time steps, and assume linear values for the acceleration and velocity over these small time steps.

How The Simulation Works

1. Assume the starting conditions of displacement, velocity and acceleration are 0. The external force $F_{ext}$ is the control variable (set by the PID controller).

2. Determine a suitably small time step, $\Delta t$. Then for each time step:

3. Calculate the forces exerted by the spring ($F_{spring} = kx$, where $k$ is the spring constant) and the damper ($F_{damper} = c\dot{x}$, where $c$ is the damping coefficient).

4. Determine the output of the PID controller, $F_{ext}$, providing it with the set-point and the “measured” displacement (which starts at 0, and then gets recalculated at each time step as below).

5. Calculate the acceleration for this time step (where $m$ is the mass):

   $$ \ddot{x} = \frac{F_{ext} - F_{spring} - F_{damper}}{m} $$

6. Calculate the change in velocity and displacement for this time step:

   $$ \Delta \dot{x} = \ddot{x}\,\Delta t \qquad \Delta x = \dot{x}\,\Delta t $$

   Note that these are changes in velocity and displacement, so to calculate the current velocity and displacement you add this change to the stored velocity/displacement state.

7. Once the values for the velocity and displacement are updated, repeat steps 3-6 for the next time step. $F_{spring}$ and $F_{damper}$ will be calculated for the next time step using these updated velocity and displacement values.
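The simulation steps above could be sketched in Python roughly as follows. This is not the code used to generate the plots in this article. The function name, the default mass, spring constant, damping coefficient, set-point, time step and the gains in the usage example are all placeholder values assumed for illustration, and the set-point step is applied from the very first time step.

```python
def simulate(
    kp: float,
    ki: float,
    kd: float,
    set_point: float = 1.0,   # assumed set-point (m)
    mass: float = 1.0,        # assumed mass (kg)
    spring_k: float = 20.0,   # assumed spring constant (N/m)
    damping_c: float = 10.0,  # assumed damping coefficient (N.s/m)
    dt: float = 0.001,        # assumed time step (s)
    duration: float = 5.0,    # assumed simulation length (s)
) -> list[float]:
    """Simulate a mass/spring/damper under parallel-form PID control.

    Returns the displacement at the end of every time step.
    """
    x = 0.0  # displacement
    v = 0.0  # velocity
    error_sum = 0.0
    last_error = 0.0
    positions = []

    for _ in range(int(round(duration / dt))):
        # Forces exerted by the spring and damper
        f_spring = spring_k * x
        f_damper = damping_c * v

        # PID controller output (parallel form), used as the external force
        error = set_point - x
        error_sum += error
        f_ext = kp * error + ki * dt * error_sum + kd * (error - last_error) / dt
        last_error = error

        # Acceleration for this time step
        a = (f_ext - f_spring - f_damper) / mass

        # Changes in velocity and displacement, added to the stored state
        delta_v = a * dt
        delta_x = v * dt
        v += delta_v
        x += delta_x
        positions.append(x)

    return positions


p_only = simulate(kp=300.0, ki=0.0, kd=0.0)
print(f"Final displacement with P-only control: {p_only[-1]:.4f}")
```

With these placeholder values, proportional-only control settles short of the set-point, which is the same steady-state error behaviour discussed in the next section.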

Running The Simulation

We’ll apply a step change at , changing the setpoint to . We’ll use a time step of . We’ll use the parallel form of PID controller:

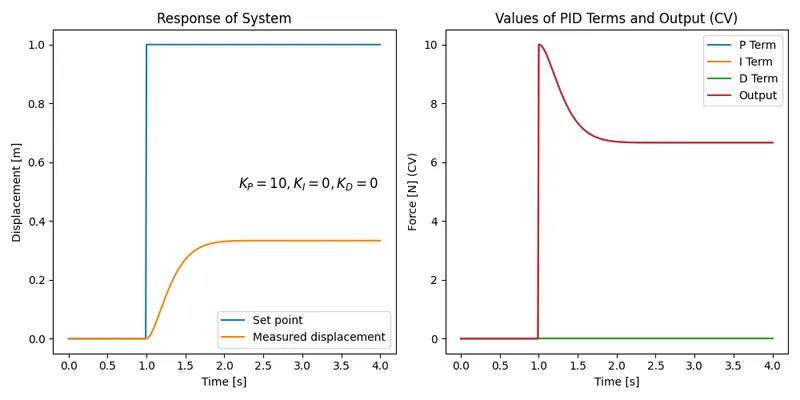

Let’s begin by just adding some proportional gain. Let’s set :

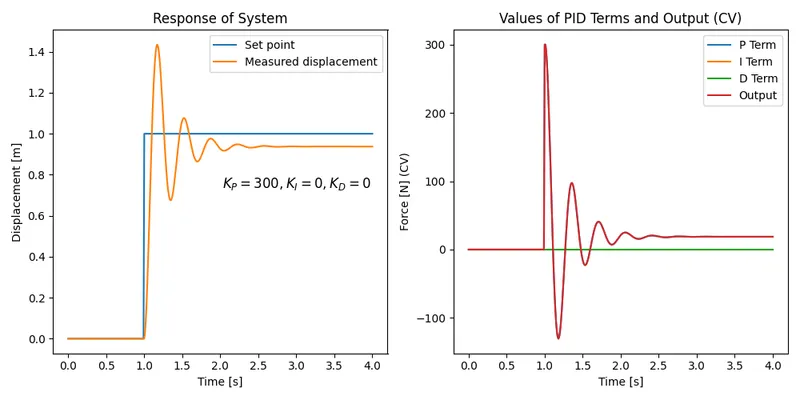

You can clearly see that this PID controller does not work very well. It only moves the block to about and then stops. Let’s bump up the proportional gain to see if we can reduce the error further:

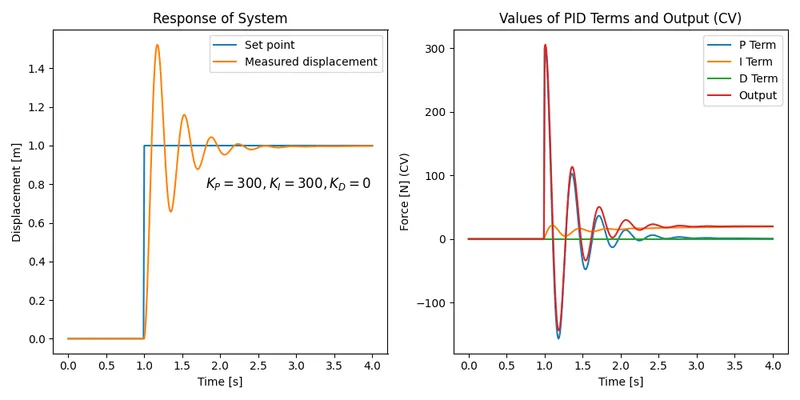

It’s better, but we still have significant steady-state error, and we’ve now got overshoot. Steady-state error is almost unavoidable with only pure proportional control. Integral control is perfect at fixing steady-state error, so let’s add some. We’ll fix the overshoot later:

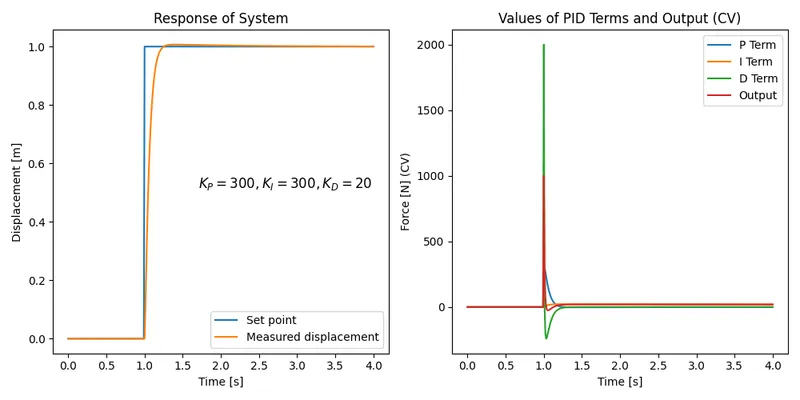

Getting better! You can see the effect of adding the integral control: the steady-state error is now eventually removed. Now let's see if we can get rid of the overshoot by adding some derivative control:

Now we are looking pretty good! We've completely removed the oscillations and the process reaches the set-point pretty quickly. Note though that the derivative term introduces a huge “kick” when the step change happens (this makes sense, because at that point there is a huge change in error!). This may saturate the actual thing connected to the control output (e.g. a linear motor which provides the external force), and control value limiting may need to be added to the PID controller.
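The steady-state error seen earlier with proportional-only control can be explained directly from the force balance. As a short derivation (using $k$ for the spring constant, $r$ for the set-point and $x_{ss}$ for the final resting displacement, symbols chosen here for illustration): once the mass has stopped moving, the velocity and acceleration are 0, so the external force must exactly balance the spring force. With only proportional control the external force is $K_p (r - x_{ss})$, hence:

$$
\begin{aligned}
K_p (r - x_{ss}) &= k x_{ss} \\
x_{ss} &= \frac{K_p}{K_p + k}\, r \\
e_{ss} = r - x_{ss} &= \frac{k}{K_p + k}\, r
\end{aligned}
$$

The error $e_{ss}$ shrinks as $K_p$ increases but never reaches 0 for any finite gain, because a non-zero error is needed to generate the force that holds the spring extended. The integral term removes this error because it can hold a non-zero output while the error itself is 0.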

If you are interested in the Python code that was used to perform this simulation, see here.

Firmware Modules

If you are looking for PID code for an embedded system, check out my Pid project on GitHub (CP3id). It is written in C++ and designed to be portable enough to run on many embedded systems, as well as Linux.

More Resources

Improving The Beginners PID: Introduction is a great set of articles that explain PID control loops and use Arduino code examples to supplement the explanations.

Practical Process Control: Proven Methods And Best Practices For Automated Process Control has lots of useful information on PID.

Implementing PID Controllers with Python Yield Statement has some interesting examples on using the yield statement in Python to help create a PID controller.

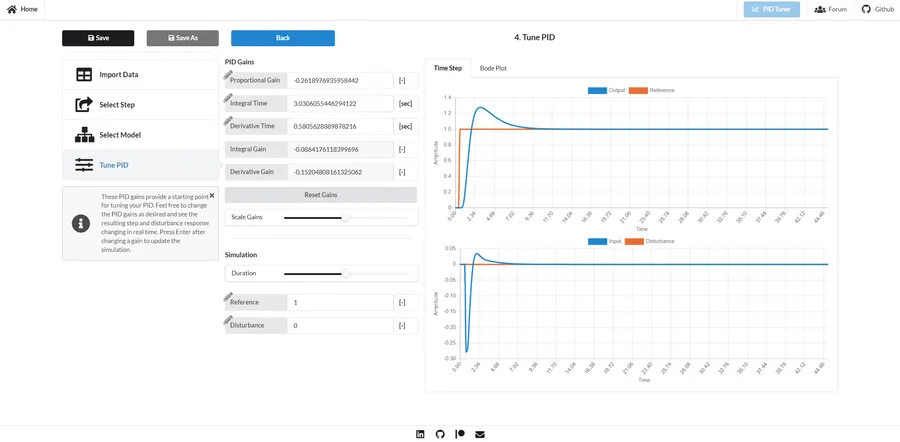

pidtuner.com is a free, open-source PID tuning web application. It works by allowing you to import measured output/input data, then select a single step change within the data, apply a mathematical model to the system, and then play around with P, I and D coefficients and see how the system responds [10].

References

1. Wikipedia (2022, Mar 30). PID controller. Retrieved 2022-05-07, from https://en.wikipedia.org/wiki/PID_controller.

2. University of Michigan - Control Tutorials For MATLAB and SIMULINK. Introduction: PID Controller Design. Retrieved 2023-06-24, from https://ctms.engin.umich.edu/CTMS/index.php?example=Introduction&section=ControlPID.

3. Jacques Smuts (2010, Mar 30). PID Controller Algorithms [Blog Post]. Retrieved 2023-06-23, from https://blog.opticontrols.com/archives/124.

4. Control (2014, Oct 27). PID Form Trick or Treat Tips [Blog Post]. Retrieved 2023-06-23, from https://www.controlglobal.com/home/blog/11328993/pid-form-trick-or-treat-tips.

5. Vance Vandoren (2016, Jul 26). Understanding PID control and loop tuning fundamentals [Web Page]. Control Engineering. Retrieved 2023-06-23, from https://www.controleng.com/articles/understanding-pid-control-and-loop-tuning-fundamentals/.

6. Wilderness Labs. Standard PID Algorithm - Understanding the real-world PID algorithm [Web Page]. Retrieved 2023-06-23, from http://developer.wildernesslabs.co/Hardware/Reference/Algorithms/Proportional_Integral_Derivative/Standard_PID_Algorithm/.

7. Tony R. Kuphaldt. PID Controllers : Parallel, Ideal & Series [Web Page]. Instrumentation Tools. Retrieved 2023-06-24, from https://instrumentationtools.com/pid-controllers/.

8. hauptmech (2012, Oct 27). What are good strategies for tuning PID loops? [Forum Post]. Stack Exchange - Robotics. Retrieved 2023-06-24, from https://robotics.stackexchange.com/questions/167/what-are-good-strategies-for-tuning-pid-loops.

9. Pramit Patodi (2020, Jul 14). Benefits in feedforward in PID controllers [Blog Post]. IncaTools. Retrieved 2023-06-22, from http://blog.incatools.com/benefits-feedforward-pid-controllers.

10. pidtuner.com. Tune your PID - It has never been easier [Web Page]. Retrieved 2023-06-27, from https://pidtuner.com/#/.