Basic Signal Types

This page is a tutorial on the basic types of signals/waveforms you will come across in engineering.

All uses of trigonometric functions such as $\sin$ and $\cos$ below assume angles measured in radians, not degrees.

This page assumes basic understanding of trigonometry and complex numbers.

Real Sinusoidal Signals

A real sinusoidal signal has the general form:

where:

, , and are real numbers

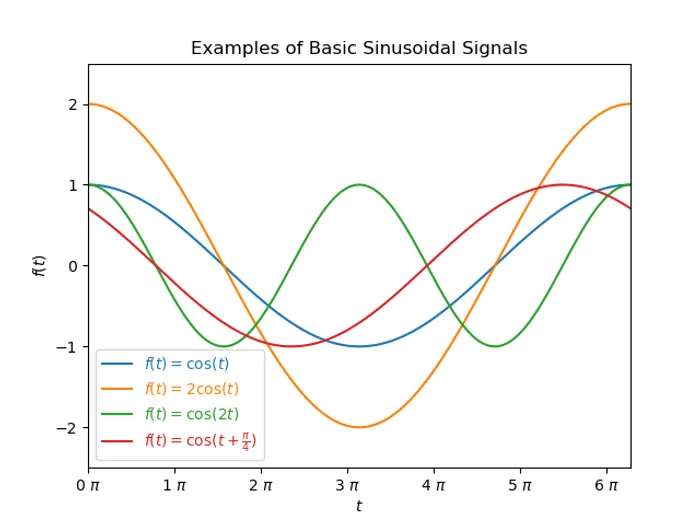

An example sinusoidal where is shown below:

controls the amplitude of the signal. A negative number will invert the signal. A value of will give an amplitude of .

controls the period/frequency of the signal. A value of gives a period of . Doubling doubles the frequency.

controls the offset of the signal along the x-axis. A positive value shift the signal to the left, a negative value shifts the signal to the right.
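The effect of each parameter can be checked numerically. The sketch below assumes the sine form $y(t) = A\sin(\omega t + \phi)$; the function names `sinusoid` and `period` and the sample values are illustrative only.

```python
import math

def sinusoid(t, A=1.0, omega=1.0, phi=0.0):
    """Real sinusoid A*sin(omega*t + phi), with angles in radians."""
    return A * math.sin(omega * t + phi)

def period(omega):
    """Period of the sinusoid: one full cycle spans 2*pi radians."""
    return 2 * math.pi / abs(omega)

t = 0.3  # arbitrary sample time

# Negating A inverts the signal.
inverted_ok = math.isclose(sinusoid(t, A=-2.0), -sinusoid(t, A=2.0))

# omega = 1 gives a period of 2*pi; doubling omega halves the period,
# which is the same as doubling the frequency.
period_ok = math.isclose(period(2.0), period(1.0) / 2)

# A positive phi shifts the waveform left by phi/omega.
phi, omega = 0.5, 2.0
shift_ok = math.isclose(
    sinusoid(t, omega=omega, phi=phi),
    sinusoid(t + phi / omega, omega=omega),
)

print(inverted_ok, period_ok, shift_ok)
```

Note that the size of the shift along the time axis is $\phi/\omega$, not $\phi$ itself, so the same phase moves a higher-frequency signal by a smaller amount.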

Sinusoidal signals normally arise in systems that conserve energy, such as an ideal mass-spring system (no damper or frictional forces!), or an ideal LC circuit (no resistive losses).

Complex sinusoidal signals are introduced after exponential signals are explained.

Exponential Signals

The general form for an exponential signal is:

$$ y(t) = A e^{at} $$

where:

$A$ and $a$ are complex numbers

Real Exponential Signals

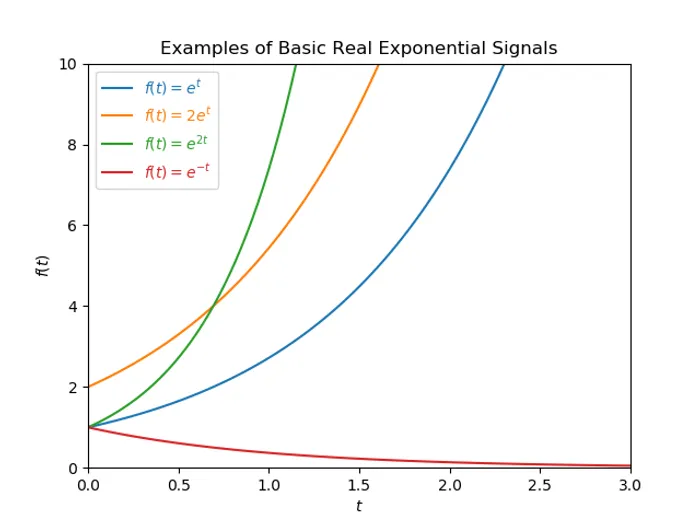

Below are some examples of real exponential signals, showing how varying and (limited to real numbers only) effect the waveform shape.

When , the signal exhibits exponential growth (this typically represents an unstable signal). When , the signal exhibits exponential decay (this typically represents a stable signal).
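A quick numerical check of the growth and decay cases, assuming the form $y(t) = Ae^{at}$ with real $A$ and $a$. The helper name `real_exponential` and the sample values are made up for illustration.

```python
import math

def real_exponential(t, A=1.0, a=1.0):
    """Real exponential A*exp(a*t) with real A and a."""
    return A * math.exp(a * t)

times = [0.0, 1.0, 2.0, 3.0]

growing = [real_exponential(t, A=1.0, a=0.5) for t in times]    # a > 0
decaying = [real_exponential(t, A=1.0, a=-0.5) for t in times]  # a < 0

# With a > 0 every sample is larger than the previous one (growth);
# with a < 0 every sample is smaller (decay towards zero).
is_growing = all(x < y for x, y in zip(growing, growing[1:]))
is_decaying = all(x > y for x, y in zip(decaying, decaying[1:]))

print(is_growing, is_decaying)
```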

Complex Exponential Signals

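If the restriction that $A$ and $a$ must be real numbers is removed from the general form for an exponential signal, so that they may be complex numbers, a different class of signals arises. We substitute $a = \sigma + j\omega$ for the complex number $a$ in the general form for an exponential signal to give:

$$ y(t) = A e^{(\sigma + j\omega)t} $$

where:

$\sigma$ is the attenuation constant

$\omega$ is the angular frequency

Recall Euler's formula:

$$ e^{j\theta} = \cos\theta + j\sin\theta $$

Also,

$$ e^{(\sigma + j\omega)t} = e^{\sigma t} e^{j\omega t} $$

This allows us to separate out the real and imaginary parts into individual terms (shown here for real $A$):

$$ y(t) = A e^{\sigma t}\cos(\omega t) + jA e^{\sigma t}\sin(\omega t) $$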

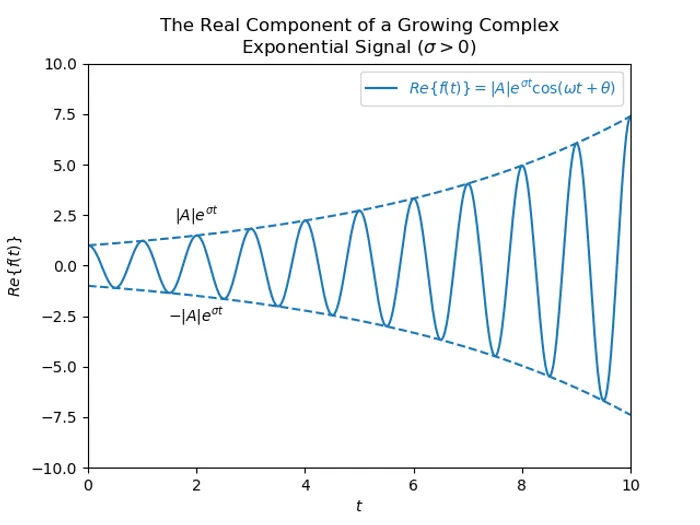

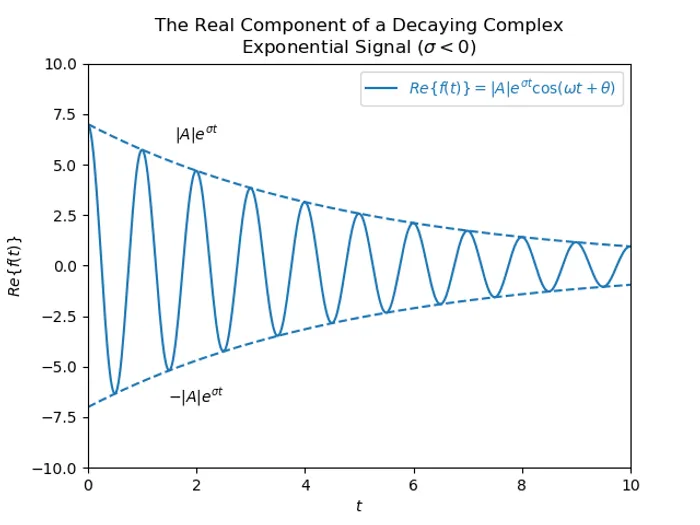

Below are graphs of the real component of both growing and decaying complex exponential signals. You can see how the signal is enveloped by . This is because the signal is the product of an exponential component and a component, and the component always varies between and .
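The envelope behaviour can be confirmed with Python's standard `cmath` module. This is a minimal sketch that assumes the form $y(t) = Ae^{(\sigma + j\omega)t}$ with a real $A$; the function name `complex_exponential` and the parameter values are chosen only for illustration.

```python
import cmath
import math

def complex_exponential(t, A=1.0, sigma=0.0, omega=1.0):
    """Complex exponential A*exp((sigma + j*omega)*t)."""
    return A * cmath.exp(complex(sigma, omega) * t)

A, sigma, omega = 2.0, -0.3, 5.0  # decaying example (sigma < 0), real A

samples = [0.1 * k for k in range(100)]
real_part_ok = True
inside_envelope = True
for t in samples:
    y = complex_exponential(t, A, sigma, omega)
    envelope = abs(A) * math.exp(sigma * t)
    # Real part equals A*exp(sigma*t)*cos(omega*t) when A is real.
    expected = A * math.exp(sigma * t) * math.cos(omega * t)
    real_part_ok = real_part_ok and math.isclose(y.real, expected, abs_tol=1e-12)
    # cos() stays within [-1, 1], so the real part stays inside the envelope.
    inside_envelope = inside_envelope and abs(y.real) <= envelope + 1e-12

print(real_part_ok, inside_envelope)
```

Changing `sigma` to a positive value gives the growing case; the same two checks still hold because only the envelope changes, not the oscillating component.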

Heaviside (Unit-Step) Function

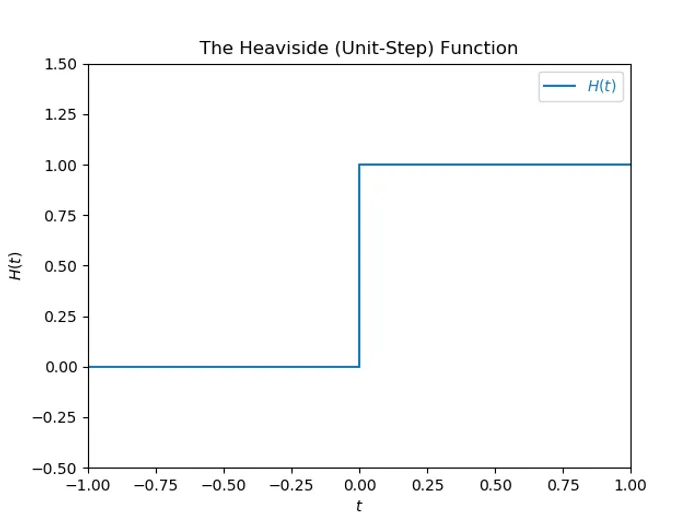

The Heaviside function, named after Oliver Heaviside (also known as the unit-step function) can be defined as:

The Heaviside function looks something like the signal below, however the point at can be drawn in different ways (more on that below):

Oliver Heaviside used his equation to calculate the current in an electrical circuit when it is first switched on.

The Different Conventions For H(0)

The value of the Heaviside function at zero ($H(t)$ at $t = 0$) depends on the implementation or use of the function. The different conventions are described below. However, the value of $H(0)$ is of no importance for most use cases of the Heaviside function, as it is commonly used as a windowing function in integration (or as a distribution), where the value at a single point does not change the result.
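One way to make the convention explicit in code is to pass the value at zero as a parameter, similar in spirit to the second argument of `numpy.heaviside()`. The pure-Python helper below is a hypothetical sketch written for this page, not a library function.

```python
def heaviside(t, h0=0.5):
    """Unit step: 0 for t < 0, 1 for t > 0, and h0 at t == 0."""
    if t < 0:
        return 0.0
    if t > 0:
        return 1.0
    return h0

# The three single-valued conventions differ only at t == 0.
for h0 in (0.0, 0.5, 1.0):
    print(h0, [heaviside(t, h0) for t in (-1.0, 0.0, 1.0)])
```

Making `h0` an argument keeps the choice visible at the call site instead of hiding it inside the function.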

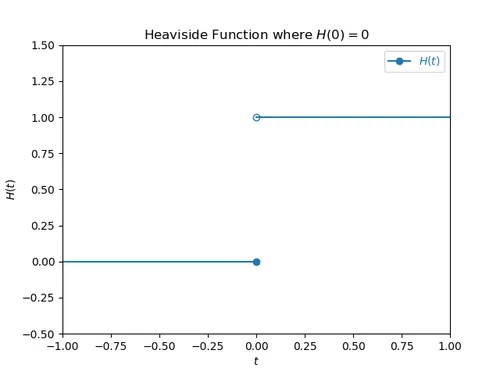

H(0) = 0

This is how the NIST DLMF (Digital Library of Mathematical Functions) defines the Heaviside function (see section 1.16.13). This version of the Heaviside function is left-continuous at $t = 0$ but not right-continuous.

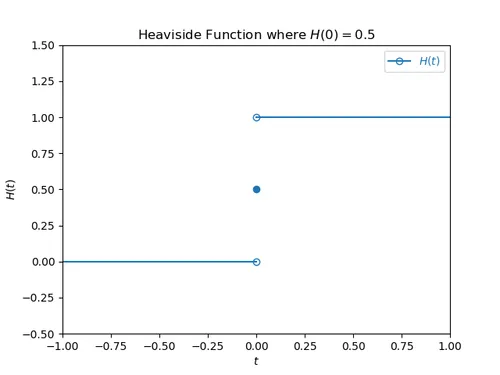

H(0) = 0.5

If the convention is used, the Heaviside function defined as:

This is the version of the Heaviside function which seems to be most often used. The Numpy documentation for numpy.heaviside() states that is often used. This format of the Heaviside is also closely related to the signum function (the function used to extract the sign of a real number), by the following equation (shifted up by 1 and then halved in magnitude):

Drawn on a graph it looks like:
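The relationship $H(t) = \tfrac{1}{2}(1 + \operatorname{sgn}(t))$ for the $H(0) = 0.5$ convention can be checked with a few lines of Python. The helper names `sgn` and `heaviside_half` are assumptions made for this sketch.

```python
def sgn(t):
    """Signum: -1 for negative t, 0 at zero, +1 for positive t."""
    return (t > 0) - (t < 0)

def heaviside_half(t):
    """Heaviside step with H(0) = 0.5, built from the signum function."""
    return (1 + sgn(t)) / 2

values = [heaviside_half(t) for t in (-3.0, 0.0, 2.5)]
print(values)  # prints [0.0, 0.5, 1.0]
```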

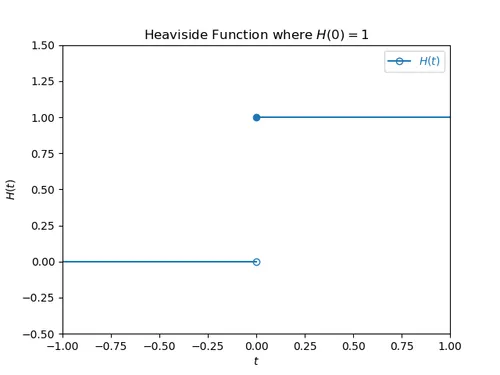

H(0) = 1

is how ISO 80000-2:2009 defines the Heaviside function. This version of the Heaviside function is right-continuous at but not left continuous. It also allows the Heaviside function to be defined as the integration of the unit impulse (Dirac delta) function:

Drawn on a graph it looks like:

The Heaviside function is usually defined like this when used in the context of cumulative distributions for statistical purposes.

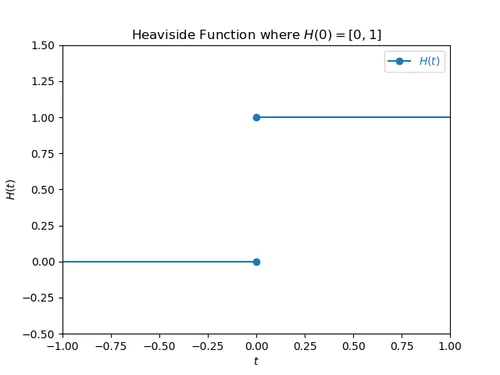

H(0) = [0, 1]

is when the Heaviside equals both and (a set) at . This approach is not as common, but is used in optimization and game theory.

Drawn on a graph it looks like:

Shifted Heaviside

TODO

Unit Impulse (Dirac Delta) Function

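The unit-impulse function (known as the Dirac delta function in the mathematical world) is defined as:

$$ \delta(t) = \begin{cases} \infty, & t = 0 \\ 0, & t \neq 0 \end{cases} \qquad \text{with} \qquad \int_{-\infty}^{\infty} \delta(t)\, dt = 1 $$

The unit-impulse function is $0$ everywhere except for $t = 0$, where it is infinite.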
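Because the ideal impulse is infinite at a single point, it cannot be sampled directly. A common workaround, sketched below as an assumption rather than anything prescribed above, is to approximate it with a narrow rectangular pulse of width `eps` and height `1/eps`, so that the area stays at 1. Integrating that pulse then approximates the unit step. The names `impulse_approx` and `running_integral` are illustrative.

```python
def impulse_approx(t, eps=1e-2):
    """Rectangular pulse of width eps and height 1/eps centred on t = 0."""
    return 1.0 / eps if abs(t) < eps / 2 else 0.0

def running_integral(t_end, eps=1e-2, t_start=-1.0, steps=20000):
    """Midpoint-rule integral of the pulse from t_start to t_end."""
    dt = (t_end - t_start) / steps
    total = sum(impulse_approx(t_start + (k + 0.5) * dt, eps) for k in range(steps))
    return total * dt

before = running_integral(-0.5)  # ends well before the pulse: 0
after = running_integral(0.5)    # ends well after the pulse: close to 1
print(before, after)
```

Shrinking `eps` makes the pulse taller and narrower while the integral across it stays near 1, which is the sense in which the pulse approaches the ideal impulse. The step count needs to grow with it so the midpoint rule still lands samples inside the pulse.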